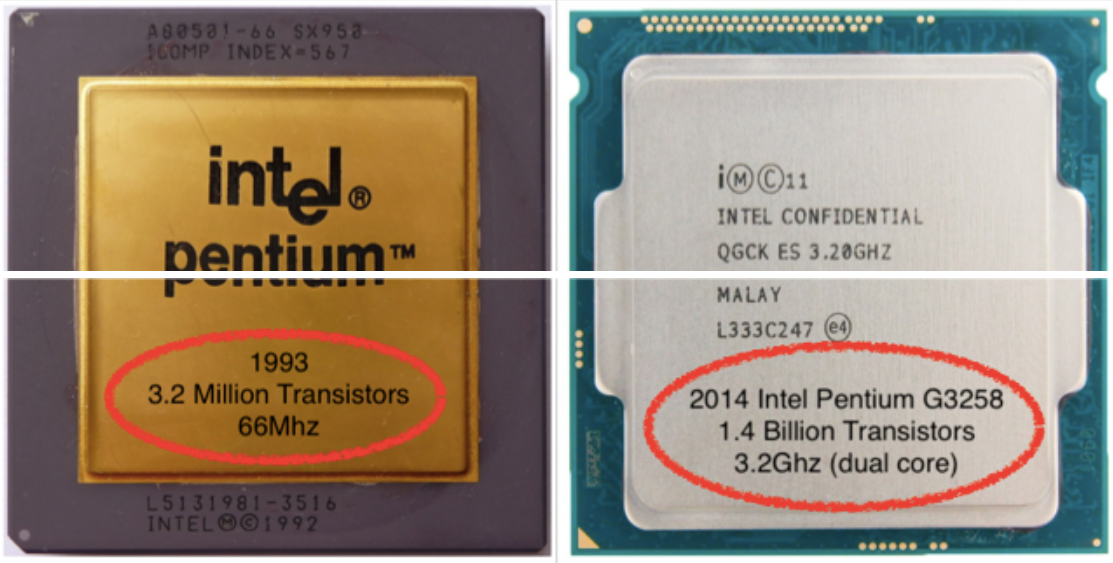

Today's processors contain billions of transistors in a tiny, constantly shrinking amount of space. All of these transistors produce heat. Power consumption for CPUs can vary wildly:

- 1000 watts on HPC servers

- 100 watts on your everyday desktop computer

- 50 watts on the average laptop

- 5 watts on a tablet

- 1 or 2 watts on a phone

- 100 milliwatts on an embedded system (like the Raspberry Pi this website is hosted on)

That's four orders of magnitude (1000 watts down to 100 milliwatts), just from those examples!
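To put a number on that spread, here is a quick back-of-the-envelope calculation using the rough figures from the list above. It assumes the lower 1 watt figure for phones, and it is only arithmetic on those approximate numbers, not measured data.

```python
import math

# Rough CPU power figures from the list above, in watts.
power_watts = {
    "HPC server": 1000.0,
    "desktop": 100.0,
    "laptop": 50.0,
    "tablet": 5.0,
    "phone": 1.0,            # lower end of "1 or 2 watts"
    "embedded system": 0.1,  # 100 milliwatts
}

largest = max(power_watts.values())
smallest = min(power_watts.values())

# The base-10 logarithm of the ratio is the number of orders of magnitude spanned.
orders = math.log10(largest / smallest)
print(f"{largest / smallest:.0f}x range, {orders:.1f} orders of magnitude")
```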

This is why I'm convinced that modern CPU design is the delicate art of placing a raging furnace on an area the size of your fingernail.

I remember my first computer, whose CPU ran without any heatsink at all. It didn't even require the fancy CPU sockets we have today. Those days are long gone.

Large desktop computers afford cooling possibilities that laptops and mobile devices can only dream of. Not to mention, these computers are rarely at peak load. The smaller the case and the higher the performance, the more challenging your situation gets. This is probably why I like this video from Linus' team:

Crazy solutions

Still, there are plenty of ways to solve this problem for a desktop:

- Big, fancy heatsink (this one is inspired by a car!)

- Better thermal interface material (or TIM goop, as I like to call it)

- Watercooling

- Submerging your computer in mineral oil???

Servers, however, are an absolute cooling nightmare. They are one of those situations where your CPU is likely to be at full load more often than not, and using your fancy liquid metal isn't an option.

- A better heatsink might help (for example, one made of copper, which has better thermal conductivity). Keep in mind that space is at a premium, so large ones are a big no-no.

- Sure, better goop can help, but the gains are marginal at best.

It is probably at this stage that people resort to other (crazy, old-school) solutions.

Namely, a duct.

Just make it so that all the 'fan-air' goes to the CPU!!

And some companies go as far as using computer simulations and modelling:

"APC Uses Airflow Simulation to Solve Data Center Cooling Problem"

Sometimes, you get so involved with solving a problem at hand that you forget to consider whether you are, in fact, solving the right problem.

Now, of course, I don't mean to discredit APC's approach to this problem. However, I think we can all agree that modelling the airflow patterns of every node is a bit overkill; a conventional approach such as the hot-aisle/cold-aisle layout would have sufficed.

Your own personal furnace

Servers are one extreme example of the tradeoff between CPU performance, heat and size. At the other end of the spectrum, our phones and tablets make the same tradeoffs at a much smaller scale. As designers get more and more obsessed with thinness, the SoC inside your phone becomes a fingernail-sized furnace in your pocket.